The Role of Computer Vision in Autonomous Vehicles

In today’s rapidly progressing automotive industry, computer vision stands out as a transformative technology that is revolutionizing how vehicles perceive and interact with their environment. This sophisticated set of algorithms and systems enables cars to analyze their surroundings with extraordinary accuracy, thereby paving the way for true autonomy. As manufacturers race to integrate these capabilities into their fleets, the implications for the future of transportation become increasingly profound.

One of the pivotal breakthroughs is object recognition, which allows vehicles to distinguish between various elements in their vicinity. Imagine a car equipped with a comprehensive suite of cameras and sensors that can detect pedestrians crossing the road, cyclists maneuvering nearby, and important road signs signaling turns or speed limits. For instance, companies like Waymo and Tesla employ advanced neural networks that continually refine their ability to identify and predict the movements of different objects. This technology is not merely a convenience; it is essential for ensuring safe navigation in complex urban environments.
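Object detectors typically propose several overlapping boxes for the same pedestrian or cyclist, so a common post-processing step is non-maximum suppression. The sketch below is a minimal, generic version of that step and does not represent any particular manufacturer's pipeline. The box coordinates, scores, and the 0.5 overlap threshold are hypothetical values chosen for illustration.

```python
from typing import List, Tuple

# A box is (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix_min, iy_min = max(a[0], b[0]), max(a[1], b[1])
    ix_max, iy_max = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(
    boxes: List[Box], scores: List[float], iou_threshold: float = 0.5
) -> List[int]:
    """Return indices of the boxes to keep, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        # Keep a box only if it does not heavily overlap a better-scoring one.
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in keep):
            keep.append(i)
    return keep


# Hypothetical detections: two overlapping boxes on one pedestrian,
# plus one separate box on a cyclist.
boxes = [(100, 100, 200, 300), (105, 110, 205, 310), (400, 120, 520, 280)]
scores = [0.90, 0.75, 0.80]
kept = non_max_suppression(boxes, scores)
```

Here the second box overlaps the first almost entirely and has a lower score, so it is dropped, leaving one box per object.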

Another cornerstone of computer vision is real-time processing. Autonomous vehicles must analyze large volumes of sensor data with minimal delay, because the ability to make split-second decisions on live data is crucial for avoiding accidents and protecting passengers. With advances in processing power and algorithms, today’s autonomous systems can interpret and respond to many potential hazards faster than a human driver typically can. For example, if a car detects a child suddenly running into the street, its computer vision system must assess the situation and trigger the brakes within a fraction of a second.
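To see why reaction time matters so much, it helps to work through the arithmetic. The sketch below compares stopping distances for two reaction delays. All of the numbers in it are illustrative assumptions rather than measured figures: a 100 ms perception latency, a 1.5 s human reaction time, and a constant deceleration of 7 m/s².

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Metres travelled at constant speed before braking starts."""
    return (speed_kmh / 3.6) * (latency_ms / 1000.0)


def braking_distance(speed_kmh: float, decel_ms2: float = 7.0) -> float:
    """Metres needed to stop at a constant deceleration (assumed 7 m/s^2)."""
    speed_ms = speed_kmh / 3.6
    return speed_ms ** 2 / (2.0 * decel_ms2)


def stopping_distance(
    speed_kmh: float, latency_ms: float, decel_ms2: float = 7.0
) -> float:
    """Reaction distance plus braking distance, in metres."""
    return distance_during_latency(speed_kmh, latency_ms) + braking_distance(
        speed_kmh, decel_ms2
    )


if __name__ == "__main__":
    # Assumed reaction delays, for illustration only.
    for label, latency_ms in (("automated system", 100.0), ("human driver", 1500.0)):
        total = stopping_distance(50.0, latency_ms)
        print(f"{label}: {total:.1f} m to stop from 50 km/h")
```

Under these assumptions the braking distance is the same in both cases; the whole difference comes from how far the car travels before the brakes are applied.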

Environmental mapping is another significant aspect. Autonomous vehicles use sophisticated 3D mapping techniques to build a detailed representation of their surroundings. Think of this as giving the vehicle a ‘mental map’ that allows it to navigate efficiently without needing constant GPS input. Companies are increasingly employing technologies like LiDAR (Light Detection and Ranging) to produce high-resolution maps that account for dynamic elements, allowing the vehicle to update its understanding in real time.
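One simple way to picture such a ‘mental map’ is an occupancy grid: the space around the vehicle is divided into cells, and each cell is marked if a range sensor reports an obstacle there. The following is a deliberately simplified 2D sketch, not a production mapping method. The grid size, cell size, and sample readings are hypothetical, and real systems work in 3D with probabilistic updates.

```python
import math
from typing import List, Tuple


def build_occupancy_grid(
    readings: List[Tuple[float, float]], grid_size: int = 10, cell_m: float = 1.0
) -> List[List[int]]:
    """Mark grid cells hit by range readings.

    Each reading is (range in metres, bearing in radians), measured from a
    vehicle sitting at the centre of a grid_size x grid_size grid.
    """
    grid = [[0] * grid_size for _ in range(grid_size)]
    half_extent = grid_size * cell_m / 2.0
    for rng, bearing in readings:
        # Convert the polar reading to Cartesian coordinates.
        x = rng * math.cos(bearing)
        y = rng * math.sin(bearing)
        col = int((x + half_extent) // cell_m)
        row = int((y + half_extent) // cell_m)
        # Readings that fall outside the mapped area are ignored.
        if 0 <= row < grid_size and 0 <= col < grid_size:
            grid[row][col] = 1
    return grid


# Hypothetical readings: one obstacle ahead, one to the side, one out of range.
readings = [(3.5, 0.0), (2.5, math.pi / 2), (20.0, 0.0)]
grid = build_occupancy_grid(readings)
occupied_cells = sum(sum(row) for row in grid)
```

Re-running the update as new readings arrive is what lets the map reflect moving objects rather than a fixed snapshot.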

Despite the promising potential of these technologies, several formidable challenges stand in the way of widespread adoption. One critical issue is data privacy. As vehicles collect vast amounts of visual data, concerns about how this information is used and protected arise. Regulatory bodies may need to establish clear guidelines to address ethical concerns associated with surveillance and data security.

Moreover, computer vision’s performance depends heavily on the weather. Adverse conditions such as heavy rain, fog, or snow can obscure camera lenses and degrade sensor readings, leading to misinterpretations of the surroundings. For instance, during a downpour, visibility can drop dramatically, and current systems may struggle to detect critical objects accurately, which raises safety concerns.

Another hurdle is navigating regulatory frameworks. Each state in the U.S. has its own set of rules governing autonomous vehicles, which can create complications for developers seeking to deploy their technology across multiple regions. For example, Arizona has been known for its welcoming stance towards testing self-driving cars, while California imposes stricter regulations, demanding extensive reporting and oversight. Finding a balanced legal path forward will be crucial for the successful integration of autonomous vehicles into daily life.

As we look to the future, the intersection of computer vision technologies and autonomous vehicles appears rich with possibilities. Continuous advancements in safety measures, including better sensor technologies and machine learning capabilities, could revolutionize not only individual travel experiences but urban planning and public transportation systems as well. The continuous quest for improved safety and functionality reveals a landscape teeming with potential, urging us to consider how we will navigate this new frontier of mobility.

Challenges in Implementing Computer Vision

The integration of computer vision technologies in autonomous vehicles is not without its hurdles. As promising as advancements in this domain may be, manufacturers face significant challenges that can impede the seamless rollout of self-driving cars onto the roads. The complexity of these obstacles necessitates a meticulous approach to innovation, ensuring that solutions not only enhance performance but also prioritize safety and efficacy.

One pressing challenge is the data volume generated by onboard cameras and sensors. Each second, a myriad of images and readings are captured, creating a vast sea of information that must be processed efficiently. This overwhelming influx raises concerns about real-time processing capabilities. To function effectively, autonomous systems need extraordinarily sophisticated algorithms that can extract relevant data from this clutter. According to research, the volume of data processed on a typical autonomous vehicle can exceed 1 terabyte per day. This immense demand puts pressure on computing resources and can lead to lagging responses during critical driving situations.
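The daily figure is easier to reason about as a sustained rate. The short calculation below converts the 1 terabyte per day cited above into megabytes per second, and also shows the per-frame time budget for a camera. The 8-hour driving day and the 30 frames per second rate are assumptions added for illustration.

```python
BYTES_PER_TB = 10 ** 12
BYTES_PER_MB = 10 ** 6


def sustained_rate_mb_per_s(tb_per_day: float, active_hours_per_day: float) -> float:
    """Average data rate in MB/s while the vehicle is actively recording."""
    active_seconds = active_hours_per_day * 3600
    return tb_per_day * BYTES_PER_TB / active_seconds / BYTES_PER_MB


def frame_budget_ms(frames_per_second: float) -> float:
    """Time available to process one frame before the next one arrives."""
    return 1000.0 / frames_per_second


# 1 TB/day comes from the text; the other inputs are assumptions.
rate_all_day = sustained_rate_mb_per_s(1.0, 24.0)    # spread over 24 hours
rate_8h_driving = sustained_rate_mb_per_s(1.0, 8.0)  # assumed 8 hours of driving
budget_30fps = frame_budget_ms(30.0)                 # assumed 30 fps camera
```

Spread over a full day, 1 TB works out to roughly 11.6 MB/s; compressed into an assumed 8 hours of driving it is roughly 34.7 MB/s, and at 30 frames per second each frame must be handled in about 33 ms.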

Furthermore, training datasets play a crucial role in the success of machine learning algorithms employed in computer vision. To achieve higher accuracy, these models require extensive and diverse datasets representative of real-world scenarios. However, curating such data poses challenges, as it must reflect a variety of conditions, including different lighting, weather, and unexpected roadway scenarios. To bridge these gaps, companies can turn to simulated environments, yet this method is not foolproof given the unpredictability of actual driving conditions.

- Environmental Variability: Variations in season and climate can make recognizing hazards much more challenging.

- Unexpected Road Scenarios: Obstacles such as construction zones or accidents require dynamic responses that may not be covered in traditional datasets.

- Human Behavior: Humans are unpredictable. Understanding how pedestrians or cyclists will behave is crucial yet challenging.

In addition to issues with data management and training, the integration of computer vision technology faces considerable hardware concerns. The reliance on high-quality sensors and cameras can lead to high production costs. The performance of these devices can also fluctuate based on their positioning and calibration, intensifying the need for rigorous testing protocols. Vehicles must be equipped with sensors that function effectively across a spectrum of environments and terrains, making the engineering of hardware solutions both complex and costly.

Moreover, the integration of computer vision systems extends beyond mere technical specifications; it intertwines with the user experience. Developers must consider how humans will interact with autonomous vehicles equipped with advanced technologies. For full autonomy to be accepted, a human-friendly interface is paramount. Consideration must also be given to how passengers feel about surrendering control to a machine; their comfort and understanding of the vehicle’s vision and decision-making processes are crucial for widespread acceptance.

As we continue to assess the remarkable innovations within autonomous vehicle systems, it is essential to address these challenges head-on. Solutions are being explored across the industry, from improved machine learning techniques to innovative hardware designs, all aimed at enhancing the reliable functionality of computer vision systems. The path towards fully autonomous vehicles requires navigating these obstacles with tenacity and insight, and it will be fascinating to witness the evolution of this technology in the years to come.

The Role of Computer Vision in Autonomous Vehicles

The integration of computer vision technology has revolutionized the landscape of autonomous vehicles, serving as a cornerstone for their operational framework. As these vehicles navigate complex environments, the ability to interpret visual data effectively cannot be overstated. Computer vision allows vehicles to recognize pedestrians, traffic signs, lane markings, and obstacles on the road, enhancing their situational awareness. This technology is equivalent to giving a car its own set of “eyes,” enabling it to make real-time decisions based on its surroundings.

Challenges of Implementing Computer Vision

Despite its tremendous advantages, the implementation of computer vision in autonomous vehicles is fraught with challenges. One significant hurdle is the variability of lighting conditions and weather, which can impede the accuracy of visual data interpretation. For instance, rain, fog, or glare from sunlight can obscure critical information, prompting the need for robust algorithms that can process images and identify objects under various conditions.

Moreover, the complexity of real-world environments poses a significant challenge. Autonomous vehicles must be able to comprehend dynamic factors, such as the unpredictable behavior of other road users. This complexity forces developers to harness advanced machine learning techniques, ensuring that systems can continuously learn and adapt.

Innovations in Computer Vision Technologies

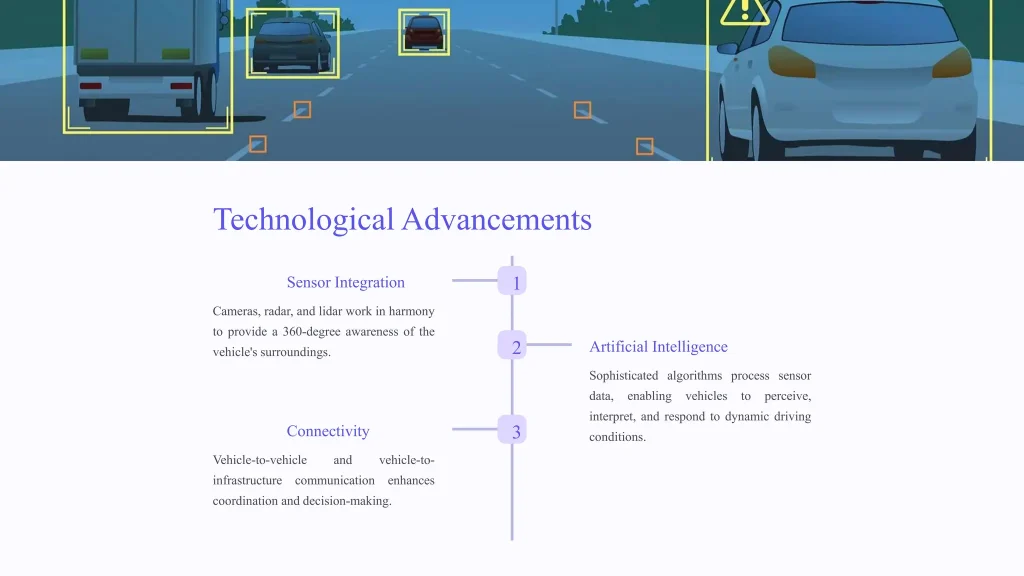

Recent innovations are paving the way for more effective integration of computer vision in autonomous vehicles. Techniques such as convolutional neural networks (CNNs) and deep learning have enhanced the performance of visual recognition systems. These technologies have the capacity to learn from vast amounts of data, improving their ability to identify and classify objects with unprecedented accuracy.

Furthermore, the combination of computer vision with other sensor technologies, such as LiDAR and radar, is creating more comprehensive situational awareness systems. By synthesizing data from multiple sources, autonomous vehicles can build a more precise, 360-degree view of their environment, reducing the likelihood of errors in perception.
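To make the "convolutional" part of a CNN concrete, the sketch below slides a small filter over a tiny grayscale image and records its response at each position. This is only the core operation of a single filter, not a full network. A real CNN learns its filter values from data, whereas the image and the vertical-edge filter here are hand-picked for illustration. As in most deep learning libraries, the filter is applied without flipping.

```python
from typing import List

Matrix = List[List[float]]


def conv2d(image: Matrix, kernel: Matrix) -> Matrix:
    """Slide the kernel over the image (no padding, stride 1)."""
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    output: Matrix = []
    for r in range(out_rows):
        out_row = []
        for c in range(out_cols):
            # Weighted sum of the image patch under the kernel.
            acc = 0.0
            for i in range(k_rows):
                for j in range(k_cols):
                    acc += image[r + i][c + j] * kernel[i][j]
            out_row.append(acc)
        output.append(out_row)
    return output


# Hypothetical 4x5 image: dark on the left, bright on the right.
image = [[0.0, 0.0, 0.0, 1.0, 1.0] for _ in range(4)]

# Hand-picked filter that responds to dark-to-bright vertical edges.
vertical_edge = [[-1.0, 0.0, 1.0] for _ in range(3)]

feature_map = conv2d(image, vertical_edge)
```

The response is zero over the uniform dark region and positive where the filter straddles the edge, which is the kind of low-level feature that later layers combine into object-level detections.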

Future Prospects

As the development of autonomous vehicles continues, the focus on refining computer vision technology is set to intensify. The need for reliability, safety, and efficiency in autonomous driving will drive ongoing research and innovation. As companies and researchers work to overcome the current limitations, the potential for computer vision to transform the functionality of autonomous vehicles holds great promise, leading to safer and smarter transportation systems in the near future.

| Advantage | Key Feature |
|---|---|
| Enhanced Safety | Real-time identification of potential hazards |
| Increased Efficiency | Optimized route planning and traffic management |

The integration of computer vision in autonomous vehicles is not merely a trend but a necessary evolution in the realm of transportation. By addressing existing challenges and embracing innovative technologies, the future of autonomous driving looks bright, poised to redefine how we understand mobility.

Innovative Solutions to Overcome Challenges

In the face of these multifaceted challenges, the autonomous vehicle industry is not standing still. Innovators and engineers are tirelessly working on creative solutions to enhance the efficacy of computer vision technologies. These innovations offer promises not just to mitigate existing problems but also to pave the way for a safer and more efficient driving experience.

One of the groundbreaking advancements in computer vision is the adoption of deep learning algorithms. These algorithms enable vehicles to recognize and interpret their surroundings with increasing accuracy. By leveraging vast datasets from varied scenarios, ranging from urban streets to rural roads, deep learning can significantly improve an autonomous vehicle’s ability to understand context and predict human behavior. This is crucial, particularly in urban settings where quick decision-making is required. Notably, companies like Tesla employ neural networks that continuously learn from real-world data collected by their fleet, improving performance over time.

The incorporation of sensor fusion is another vital innovation in the integration of computer vision. This approach combines data from multiple sources, such as LiDAR, radar, and infrared sensors, to create a more comprehensive understanding of the environment. Sensor fusion helps mitigate some of the environmental challenges described earlier, in which individual sensors struggle under adverse conditions such as heavy rain or fog. By relying on a combination of inputs, autonomous vehicles become more resilient to such conditions. According to industry studies, vehicles employing sensor fusion technology have demonstrated improved obstacle detection rates, reducing blind spots and enhancing safety on the roads.
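One textbook way to combine several noisy estimates of the same quantity is inverse-variance weighting: each sensor's reading is weighted by how much it can be trusted. The sketch below applies this to three distance estimates of one obstacle. It assumes the sensor errors are independent, and the readings and variances are hypothetical. Production systems generally use richer methods such as Kalman filtering, so treat this as a minimal illustration of the idea only.

```python
from typing import List, Tuple


def fuse_estimates(measurements: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Inverse-variance weighted fusion.

    Each measurement is (value, variance). Returns (fused value, fused variance).
    Assumes the measurement errors are independent.
    """
    weights = [1.0 / variance for _, variance in measurements]
    total_weight = sum(weights)
    fused_value = (
        sum(w * value for w, (value, _) in zip(weights, measurements)) / total_weight
    )
    return fused_value, 1.0 / total_weight


# Hypothetical distance-to-obstacle readings in fog, as (metres, variance).
camera = (25.0, 4.0)   # camera is unreliable in fog, so its variance is large
radar = (22.0, 0.25)   # radar is largely unaffected, so its variance is small
lidar = (22.4, 1.0)

fused_distance, fused_variance = fuse_estimates([camera, radar, lidar])
```

The fused estimate lands close to the radar reading because radar carries the most weight in these conditions, and the fused variance is lower than that of any single sensor.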

Additionally, advancements in edge computing are revolutionizing real-time data processing. Instead of relying heavily on cloud-based systems that can introduce latency, edge computing processes data directly within the vehicle. This shift significantly reduces lag, which is paramount for applications that require instant decision-making, such as recognizing pedestrians and reacting promptly. Furthermore, edge computing enhances data privacy because fewer sensitive images and readings are transmitted over networks.

- Collaborative Learning: New strategies, such as federated learning, enable vehicles to learn from collective experiences without directly sharing the raw data. This can lead to improved models while maintaining user privacy.

- Advanced Simulation: Innovations in artificial intelligence have given rise to sophisticated simulators that create realistic driving scenarios, allowing for improved training datasets without the associated risks of on-road testing.

- Real-Time Update Mechanisms: Seamless OTA (Over-The-Air) updates allow manufacturers to deploy enhancements and corrections to vehicle systems, keeping the autonomous driving software continuously updated with the latest algorithms.
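The collaborative learning idea in the list above can be sketched with federated averaging, in which each vehicle trains locally and shares only its model parameters. The example below shows just the server-side averaging step. The parameter vectors and sample counts are hypothetical, and a real deployment would add local training, secure aggregation, and communication logic.

```python
from typing import List


def federated_average(
    client_params: List[List[float]], client_sample_counts: List[int]
) -> List[float]:
    """Average model parameters, weighted by each client's number of samples."""
    total_samples = sum(client_sample_counts)
    n_params = len(client_params[0])
    return [
        sum(
            params[i] * count
            for params, count in zip(client_params, client_sample_counts)
        )
        / total_samples
        for i in range(n_params)
    ]


# Hypothetical locally trained parameters from two vehicles.
vehicle_a_params = [1.0, 2.0]   # trained on 100 local samples
vehicle_b_params = [3.0, 4.0]   # trained on 300 local samples

global_params = federated_average(
    [vehicle_a_params, vehicle_b_params], [100, 300]
)
```

Only the parameter lists leave each vehicle; the raw camera frames that produced them stay on board, which is the privacy benefit the approach is aiming for.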

Regulatory frameworks are also evolving to support the integration of these advanced technologies. As government bodies and industry leaders interact more closely, new guidelines are being drafted that address the specific needs and challenges of autonomous systems. This symbiosis can facilitate faster approvals for emerging tech and provide a legal structure that bolsters consumer confidence in autonomous vehicles.

Meanwhile, manufacturers are increasingly focusing on enhancing the human-vehicle interaction experience to bolster user acceptance. Transparent communication of the operational capabilities and limitations of autonomous vehicles through intuitive user interfaces can help build trust. Companies are exploring augmented reality dashboards that inform passengers in real-time about the vehicle’s decisions, ultimately demystifying the technology.

As we continue to navigate the landscape of autonomous vehicles, the convergence of these innovations and solutions illustrates the industry’s commitment to overcoming obstacles and enhancing the role of computer vision in achieving truly self-sufficient transportation. The fusion of creativity and technology is key to visualizing an autonomous future that is not only efficient but also safe.

Conclusion

In summary, the integration of computer vision in autonomous vehicles represents a pivotal shift in the future of transportation. While challenges such as environmental variability, real-time processing demands, and regulatory uncertainty remain, the relentless spirit of innovation within the industry shines through. Advancements in deep learning algorithms, sensor fusion, and edge computing are not only refining the capabilities of autonomous systems but also ensuring their safety and efficiency on the road. As companies actively develop strategies like collaborative learning and implement advanced simulations, they are laying down the groundwork for a more reliable and resilient driving experience.

The importance of regulatory frameworks and enhanced human-vehicle interaction cannot be overstated. As regulators and stakeholders work together to create supportive environments, public trust in autonomous technology is likely to grow. This transformation requires transparency and continuous dialogue about the technology’s limitations and potential. At the same time, better real-time communication between vehicles and passengers fosters a deeper sense of security, encouraging wider acceptance of autonomous driving.

Looking forward, the implications of these innovations extend beyond mere transportation convenience. They hint at a smarter, more interconnected future where safety and mobility enhance urban living. As we stand at this crossroads, the ongoing evolution of computer vision is set to redefine our expectations and experiences on the road. Monitoring these developments closely will keep us informed as we inch closer to a fully autonomous reality that promises a new era in travel.